Scripted with A.I.

Set the percentage based on measurable facts about how many lines were altered or created with A.I.
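
One way to ground that percentage is to count lines directly. The sketch below is a minimal illustration, assuming you already have two counts for a change: the lines altered or created with A.I. and the total lines changed. The function name and its inputs are hypothetical and not part of any existing tool.

```python
def ai_line_percentage(ai_lines: int, total_lines: int) -> float:
    """Return the share of changed lines altered or created with A.I., in percent."""
    if ai_lines < 0 or total_lines < 0:
        raise ValueError("line counts must be non-negative")
    if ai_lines > total_lines:
        raise ValueError("ai_lines cannot exceed total_lines")
    if total_lines == 0:
        # Assumption: an empty change counts as 0% A.I.
        return 0.0
    return 100.0 * ai_lines / total_lines
```

For example, `ai_line_percentage(30, 120)` gives 25.0, meaning a quarter of the changed lines came from A.I.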

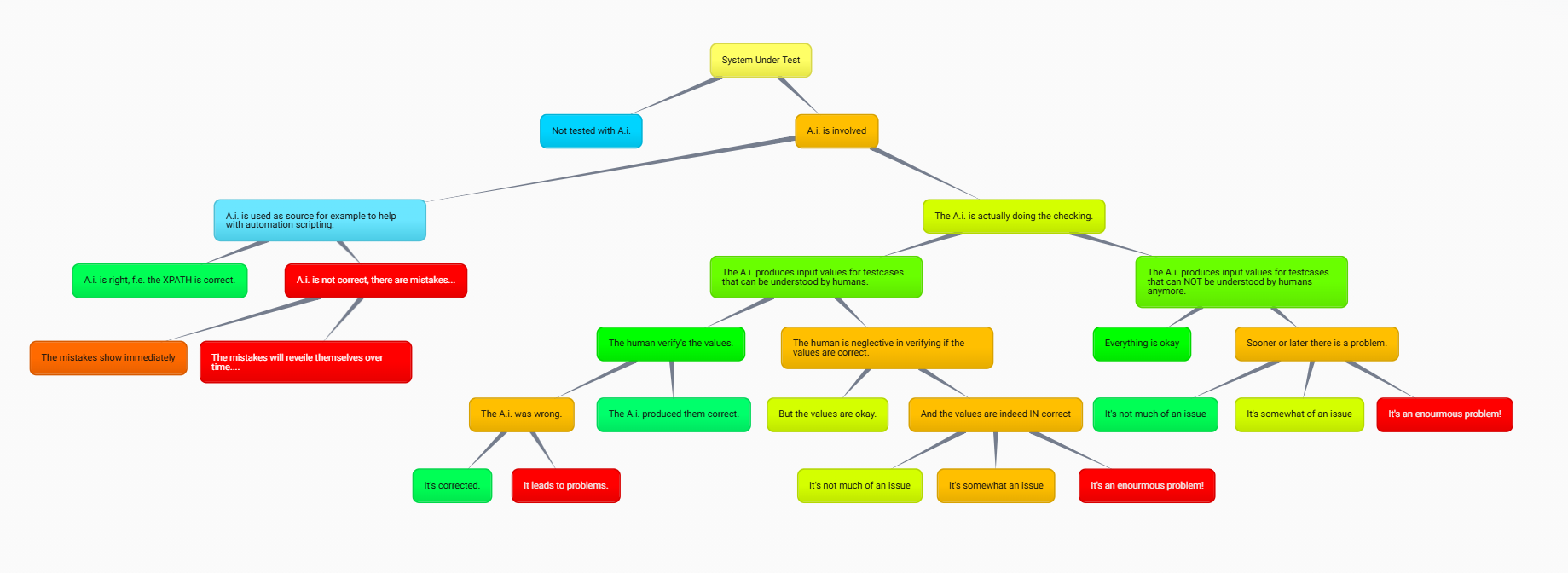

If you use A.I. in a project, you need to know where the risks are and how much risk it introduces. Fully understanding the code is different from letting the A.I. produce lines when you cannot follow what it did or how it got them working.

So you need some way to log or monitor what happened before a given commit, right?
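
As a rough idea of what such a log could look like, the sketch below records one entry per commit and serializes it as a single JSON line. Every field name here (`commit`, `ai_lines`, `total_lines`, `understood`, `note`) is an assumption made for illustration; the record format is hypothetical and not tied to any version-control feature.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class CommitAiRecord:
    """Hypothetical per-commit record of A.I. involvement."""
    commit: str        # commit identifier, for example a hash
    ai_lines: int      # lines altered or created with A.I.
    total_lines: int   # all lines changed in the commit
    understood: bool   # whether the author fully follows the A.I.-produced code
    note: str = ""     # free-text remark on what the A.I. was asked to do


def to_log_line(record: CommitAiRecord) -> str:
    """Serialize a record as one JSON line, suitable for appending to a log file."""
    return json.dumps(asdict(record), sort_keys=True)
```

Appending one such line per commit keeps a plain-text history that can be read back later with `json.loads`.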

Second Part · Adjustable View

A comparison block with three live sliders lets you set the score yourself and instantly shows how scripted, understood, and functional the result feels.

Use the values above to plot a single point. Lower A.I. exposure, stronger understanding, working functionality, better isolation, and a smaller implementation scope push the point toward safer territory.
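
The text does not say how the values combine into that point, so the sketch below makes its own assumptions: every factor is scaled to the range 0 to 1, all five factors carry equal weight, and the result is a single risk value where 0 is safest and 1 is riskiest. The function name, parameter names, and weighting are hypothetical.

```python
def risk_score(
    ai_exposure: float,
    understanding: float,
    functionality: float,
    isolation: float,
    scope: float,
) -> float:
    """Combine five factors in [0, 1] into one risk value in [0, 1].

    Assumption: equal weights. Higher ai_exposure and scope raise the risk;
    higher understanding, functionality, and isolation lower it.
    """
    factors = {
        "ai_exposure": ai_exposure,
        "understanding": understanding,
        "functionality": functionality,
        "isolation": isolation,
        "scope": scope,
    }
    for name, value in factors.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    # Invert the protective factors so that every term points toward risk.
    contributions = [
        ai_exposure,
        1.0 - understanding,
        1.0 - functionality,
        1.0 - isolation,
        scope,
    ]
    return sum(contributions) / len(contributions)
```

With these assumptions, a fully understood, working, isolated, small change with no A.I. exposure scores 0.0, and the opposite extreme scores 1.0. Different weights are just as defensible; the point is only that each factor moves the score in the direction described.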